Dense 3D Semantic Mapping of Indoor Scenes from RGB-D Images

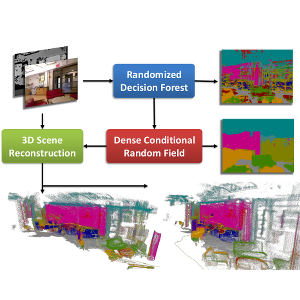

Dense semantic segmentation of 3D point clouds is a challenging task. Many approaches address 2D semantic segmentation and obtain impressive results. With the availability of cheap RGB-D sensors, the field of indoor semantic segmentation has seen a lot of progress. Still, it remains unclear how best to approach 3D semantic segmentation. We propose a novel 2D-3D label transfer based on Bayesian updates and dense pairwise 3D Conditional Random Fields. This approach allows us to use 2D semantic segmentations to create a consistent 3D semantic reconstruction of indoor scenes. To this end, we also propose a fast 2D semantic segmentation approach based on Randomized Decision Forests. Furthermore, we show that it is not necessary to compute a semantic segmentation for every frame in a sequence in order to create accurate semantic 3D reconstructions. We evaluate our approach on both NYU Depth datasets and show that we can obtain a significant speed-up compared to other methods.
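To illustrate the idea of a Bayesian 2D-3D label transfer, the following is a minimal sketch of a recursive Bayesian update for a single 3D point. It is not the paper's implementation: it assumes a uniform prior over classes, conditional independence between frames, and that each segmented frame supplies a per-class probability vector for the pixel onto which the point projects. The function names `bayes_update` and `fuse_frames` and the example numbers are hypothetical.

```python
def bayes_update(belief, frame_probs):
    """Fuse one frame's per-class probabilities into a point's label belief.

    Assumes frames are conditionally independent given the true label, so the
    posterior is the normalized element-wise product of belief and observation.
    """
    if len(belief) != len(frame_probs):
        raise ValueError("belief and frame_probs must cover the same classes")
    posterior = [b * p for b, p in zip(belief, frame_probs)]
    total = sum(posterior)
    if total == 0.0:
        # Observation contradicts every class; keep the previous belief.
        return list(belief)
    return [v / total for v in posterior]


def fuse_frames(num_classes, frame_predictions):
    """Start from a uniform prior and fold in each available 2D prediction."""
    belief = [1.0 / num_classes] * num_classes
    for frame_probs in frame_predictions:
        belief = bayes_update(belief, frame_probs)
    return belief


# Hypothetical 2D classifier outputs for one 3D point seen in three frames
# (three classes, made-up values).
frames = [
    [0.6, 0.3, 0.1],
    [0.5, 0.4, 0.1],
    [0.2, 0.7, 0.1],
]
belief = fuse_frames(3, frames)
label = max(range(len(belief)), key=belief.__getitem__)
```

Because the update only runs for frames that have a prediction, a point's belief can be built from a subset of the sequence, which is compatible with segmenting only some frames. The dense pairwise 3D CRF mentioned in the abstract would operate on such per-point beliefs and is not sketched here.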

@inproceedings{Hermans14ICRA,
  author = {Alexander Hermans and Georgios Floros and Bastian Leibe},
  title = {{Dense 3D Semantic Mapping of Indoor Scenes from RGB-D Images}},
  booktitle = {International Conference on Robotics and Automation},
  year = {2014}
}