Welcome

Welcome to the Computer Vision Group at RWTH Aachen University!

The Computer Vision group was established at RWTH Aachen University in the context of the Cluster of Excellence "UMIC - Ultra High-Speed Mobile Information and Communication" and is associated with the Chair Computer Sciences 8 - Computer Graphics, Computer Vision, and Multimedia. The group focuses on computer vision applications for mobile devices and robotic or automotive platforms. Our main research areas are visual object recognition, tracking, self-localization, and 3D reconstruction, and in particular combinations of these topics.

We offer lectures and seminars about computer vision and machine learning.

You can browse through all our publications and the projects we are working on.

Important information for the winter semester 2023/2024: Unfortunately, the following lectures are not offered this semester: a) Computer Vision 2, b) Advanced Machine Learning.

News

• WACV'26: Our paper "We Still See Broken Limbs: Towards Anatomical Realism in GenAI via Human Preference Learning" was accepted at the 5th Workshop on Image/Video/Audio Quality Assessment in Computer Vision, VLM and Diffusion Models at the IEEE/CVF Winter Conference on Applications of Computer Vision 2026. See you in Tucson, Arizona! (Jan. 23, 2026)

• ICCV'25: Our paper "DONUT: A Decoder-Only Model for Trajectory Prediction" was accepted at the 2025 International Conference on Computer Vision (ICCV)! Our project "Sa2VA-i: Improving Sa2VA Results with Consistent Training and Inference" achieved 3rd place in the MeViS Track of the LSVOS Workshop. (Oct. 1, 2025)

• RO-MAN'25: Our paper "How do Foundation Models Compare to Skeleton-Based Approaches for Gesture Recognition in Human-Robot Interaction?" has been accepted! (June 12, 2025)

• CVPR'25: We have two papers accepted at the Conference on Computer Vision and Pattern Recognition (CVPR) 2025! (May 5, 2025)

• ICRA'25: We have four papers at the IEEE International Conference on Robotics and Automation (ICRA). See you all in Atlanta! (Feb. 20, 2025)

• WACV'25: Our work "Fine-Tuning Image-Conditional Diffusion Models is Easier than You Think" has been accepted at WACV'25. (Nov. 18, 2024)

Recent Publications

Block-Sparse Global Attention for Efficient Multi-View Geometry Transformers

IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2026

Efficient and accurate feed-forward multi-view reconstruction has long been an important task in computer vision. Recent transformer-based models like VGGT, $\pi^3$, and MapAnything have demonstrated remarkable performance with relatively simple architectures. However, their scalability is fundamentally constrained by the quadratic complexity of global attention, which imposes a significant runtime bottleneck when processing large image sets. In this work, we empirically analyze the global attention matrix of these models and observe that the probability mass concentrates on a small subset of patch-patch interactions corresponding to cross-view geometric correspondences. Building on this insight and inspired by recent advances in large language models, we propose a training-free, block-sparse replacement for dense global attention, implemented with highly optimized kernels. Our method accelerates inference by more than 3x while maintaining comparable task performance. Evaluations on a comprehensive suite of multi-view benchmarks demonstrate that our approach seamlessly integrates into existing global attention-based architectures such as VGGT, $\pi^3$, and MapAnything, while substantially improving scalability to large image collections.
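To give a rough feel for what block-sparse attention means, the following is a minimal pure-Python sketch, not the authors' implementation or their optimized kernels. The block selection rule (scoring blocks by mean-pooled queries and keys and keeping the top `keep` key blocks) and all names (`block_sparse_attention`, `block`, `keep`) are assumptions made for illustration only.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def mean_rows(rows):
    # Column-wise mean of a list of equally long vectors.
    return [sum(col) / len(rows) for col in zip(*rows)]

def block_sparse_attention(q, k, v, block=2, keep=1):
    """Each query block attends only to its `keep` best-scoring key blocks.

    Assumption: blocks are ranked by the dot product of mean-pooled
    queries and keys; this heuristic is illustrative, not from the paper.
    """
    d = len(q[0])
    q_blocks = [range(i, min(i + block, len(q))) for i in range(0, len(q), block)]
    k_blocks = [range(i, min(i + block, len(k))) for i in range(0, len(k), block)]
    k_means = [mean_rows([k[j] for j in kb]) for kb in k_blocks]
    out = [None] * len(q)
    for qb in q_blocks:
        q_mean = mean_rows([q[i] for i in qb])
        scores = [dot(q_mean, km) for km in k_means]
        top = sorted(range(len(k_blocks)), key=lambda b: scores[b], reverse=True)[:keep]
        cols = [j for b in sorted(top) for j in k_blocks[b]]
        for i in qb:
            # Softmax attention restricted to the kept key positions.
            logits = [dot(q[i], k[j]) / math.sqrt(d) for j in cols]
            m = max(logits)
            w = [math.exp(x - m) for x in logits]
            z = sum(w)
            out[i] = [sum(wi * v[j][c] for wi, j in zip(w, cols)) / z
                      for c in range(len(v[0]))]
    return out
```

With `keep` equal to the number of key blocks, this reduces to ordinary dense softmax attention; smaller values skip whole blocks of the attention matrix, which is where the savings would come from.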


VidEoMT: Your ViT is Secretly Also a Video Segmentation Model

IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2026

Existing online video segmentation models typically combine a per-frame segmenter with complex specialized tracking modules. While effective, these modules introduce significant architectural complexity and computational overhead. Recent studies suggest that plain Vision Transformer (ViT) encoders, when scaled with sufficient capacity and large-scale pre-training, can conduct accurate image segmentation without requiring specialized modules. Motivated by this observation, we propose the Video Encoder-only Mask Transformer (VidEoMT), a simple encoder-only video segmentation model that eliminates the need for dedicated tracking modules. To enable temporal modeling in an encoder-only ViT, VidEoMT introduces a lightweight query propagation mechanism that carries information across frames by reusing queries from the previous frame. To balance this with adaptability to new content, it employs a query fusion strategy that combines the propagated queries with a set of temporally-agnostic learned queries. As a result, VidEoMT attains the benefits of a tracker without added complexity, achieving competitive accuracy while being 5x to 10x faster, running at up to 160 FPS with a ViT-L backbone.
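The query propagation and fusion loop described above can be pictured with a small sketch. This is only an illustration under stated assumptions: the fusion rule (a weighted sum controlled by a hypothetical `alpha`), the function names, and the `encode_frame` callback standing in for the ViT encoder are all invented here and are not taken from the paper.

```python
def fuse_queries(propagated, learned, alpha=0.5):
    # Assumed fusion rule: element-wise weighted sum of the two query sets.
    return [[alpha * p + (1 - alpha) * l for p, l in zip(p_row, l_row)]
            for p_row, l_row in zip(propagated, learned)]

def run_video(frames, learned, encode_frame, alpha=0.5):
    """Process frames in order, reusing the previous frame's output queries.

    `encode_frame(frame, queries)` is a hypothetical stand-in for the
    encoder; it must return updated queries of the same shape.
    """
    prev = None
    outputs = []
    for frame in frames:
        # First frame: only learned queries. Later frames: fused queries.
        queries = learned if prev is None else fuse_queries(prev, learned, alpha)
        prev = encode_frame(frame, queries)
        outputs.append(prev)
    return outputs

def toy_encoder(frame, queries):
    # Toy stand-in: shifts every query entry by the (scalar) frame value.
    return [[x + frame for x in row] for row in queries]

print(run_video([1.0, 2.0], [[0.0, 0.0]], toy_encoder))
```

The point of the sketch is the control flow: no separate tracker runs between frames, and the only state carried across time is the set of queries.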


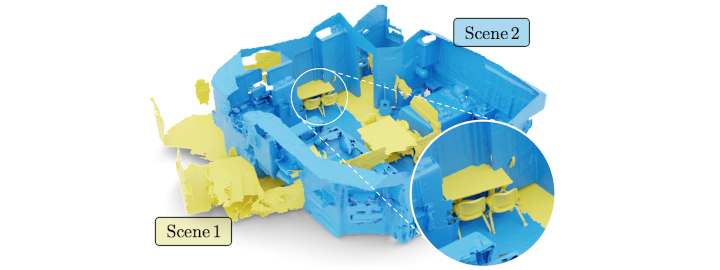

DINO in the Room: Leveraging 2D Foundation Models for 3D Segmentation

2026 International Conference on 3D Vision (3DV)

Vision foundation models (VFMs) trained on large-scale image datasets provide high-quality features that have significantly advanced 2D visual recognition. However, their potential in 3D scene segmentation remains largely untapped, despite the common availability of 2D images alongside 3D point cloud datasets. While significant research has been dedicated to 2D-3D fusion, recent state-of-the-art 3D methods predominantly focus on 3D data, leaving the integration of VFMs into 3D models underexplored. In this work, we challenge this trend by introducing DITR, a generally applicable approach that extracts 2D foundation model features, projects them to 3D, and finally injects them into a 3D point cloud segmentation model. DITR achieves state-of-the-art results on both indoor and outdoor 3D semantic segmentation benchmarks. To enable the use of VFMs even when images are unavailable during inference, we additionally propose to pretrain 3D models by distilling 2D foundation models. By initializing the 3D backbone with knowledge distilled from 2D VFMs, we create a strong basis for downstream 3D segmentation tasks, ultimately boosting performance across various datasets.
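The "project 2D features to 3D" step can be sketched roughly as follows. This is a simplified illustration, not the DITR implementation: it assumes a pinhole camera with hypothetical intrinsics `fx`, `fy`, `cx`, `cy`, points already given in camera coordinates, nearest-pixel lookup, and a zero vector for points that fall outside the image.

```python
def lift_features(points, feature_map, fx, fy, cx, cy):
    """Assign each 3D point the 2D feature at the pixel it projects to.

    points: list of (x, y, z) in camera coordinates (assumption).
    feature_map: nested list indexed as feature_map[row][col] -> feature vector.
    """
    height, width = len(feature_map), len(feature_map[0])
    dim = len(feature_map[0][0])
    lifted = []
    for x, y, z in points:
        feat = [0.0] * dim  # fallback for points not visible in the image
        if z > 0:  # only points in front of the camera can be projected
            u = int(round(fx * x / z + cx))  # pixel column
            v = int(round(fy * y / z + cy))  # pixel row
            if 0 <= u < width and 0 <= v < height:
                feat = list(feature_map[v][u])
        lifted.append(feat)
    return lifted
```

The resulting per-point features could then be combined with the point cloud model's own features; how and where that injection happens is a design choice of the actual method and is not modeled here.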
